What Business Leaders Need to Know

- Small Language Models (SLMs) outperform general-purpose Large Language Models (LLMs) for many enterprise tasks while being faster and less expensive.

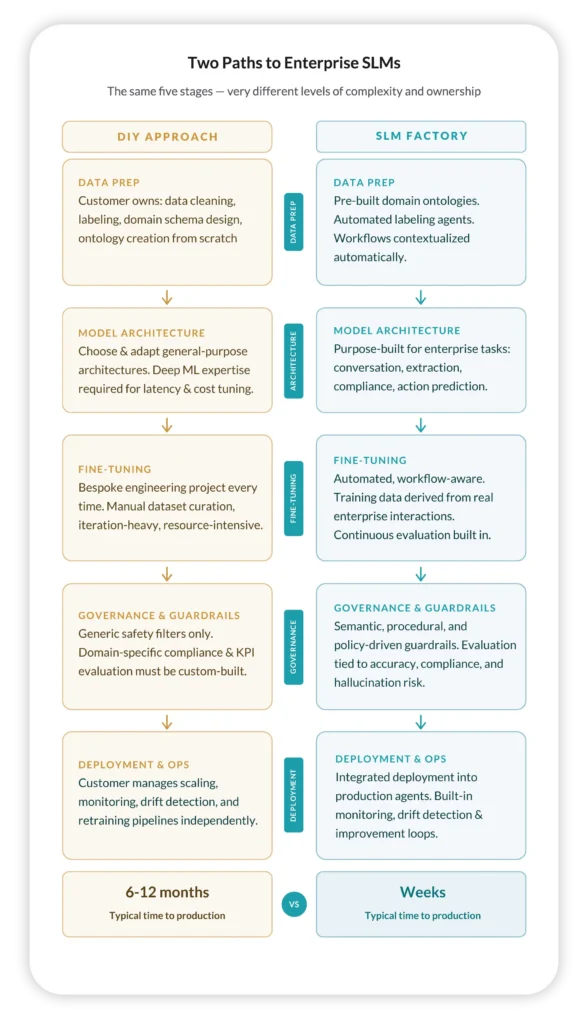

- Building SLMs internally from scratch is difficult — requiring data labeling, domain ontologies, model tuning, evaluation, and governance, with many teams spending 6–12 months building infrastructure before seeing results.

- Leaders don’t want to build AI infrastructure — they want AI-driven business outcomes.

- A turnkey SLM Factory provides the full lifecycle: data prep, fine-tuning, governance, deployment, and monitoring.

- The result: production-ready SLMs in weeks instead of months, without building the stack yourself.

See why enterprise AI leaders are choosing a turnkey approach for Small Language Models (SLMs) over building their own.

There’s a pattern playing out across enterprise AI programs right now. A CTO or VP of AI greenlights a Small Language Model (SLM) initiative. Six months go by, and the team has cloud credits, GPU access, and talented ML engineers — but the model still isn’t in production.

Sound familiar?

The promise of SLMs is real: smaller, faster, less expensive models, laser-focused on a business's own context rather than trying to know everything. Unlike their massive Large Language Model (LLM) counterparts, SLMs can be owned, governed, and optimized entirely by your organization. For enterprise use cases like conversation understanding, compliance detection, workflow automation, and action prediction, Small Language Models frequently outperform general-purpose models at a fraction of the cost.

But there’s a critical distinction that often gets glossed over in the initial excitement: building SLMs is itself a massive engineering undertaking. And for most enterprises, that hidden complexity is exactly what’s delaying value.

This is where the concept of an SLM Factory becomes important. An SLM Factory is an industrialized, autonomous platform for continuously creating fine-tuned, domain-specific models — powered by your enterprise data, context, and processes.

Rather than assembling and operating your own model-building stack from scratch, an SLM Factory gives you the entire pipeline as a single, integrated system. The question for most enterprise AI leaders isn’t whether to use one; it’s whether to build it yourself or take advantage of a purpose-built system.

The DIY Model-Building Trap

When enterprises decide to build Small Language Models internally, they typically have access to powerful cloud infrastructure — compute, storage, training orchestration, model registries. The tooling is excellent. The problem isn’t the tools.

The problem is everything the tools don’t do for you.

Before you ever train a model, you need to design domain ontologies and taxonomies from scratch, essentially teaching the model what your business's terms, entities, and processes actually mean. You need to clean, label, and structure enormous volumes of enterprise data in a way that's relevant to the specific tasks you're targeting. You need ML architects who can select and adapt model architectures for your latency and cost requirements. You need evaluation frameworks tied not to academic benchmarks but to your actual business KPIs.

And that’s just to get to training.

Once you’re in the training loop, you’re managing fine-tuning pipelines, debugging data quality issues, iterating on results, and hoping your in-house domain experts and ML engineers are speaking the same language. After deployment, you’re on the hook for monitoring, drift detection, retraining pipelines, and keeping guardrails updated as your business evolves.

For organizations with large, dedicated AI platform teams, this is feasible — though still slow. For everyone else, it means months of engineering work before a single business outcome is delivered.

What Enterprise AI Leaders Actually Need to Deliver Measurable Outcomes

The most forward-thinking AI leaders we work with have arrived at the same conclusion: they don’t want to be in the business of building model factories. They want to be in the business of deploying intelligent workflows that drive measurable outcomes.

That reframing matters enormously. It shifts the question from “how do we build and operate an SLM pipeline?” to “how do we get accurate, governed, production-ready SLMs into our workflows as fast as possible?”

Those are very different problems, and they call for very different solutions.

A Turnkey Approach: The SLM Factory Model

At Uniphore, we’ve built what we call an SLM Factory — a fully integrated, end-to-end platform that handles the entire lifecycle from raw enterprise data to production-deployed models, purpose-built for enterprise workflows.

Here’s what that means in practice across each stage of the SLM lifecycle:

Data Preparation Without the Data Engineering Sprint

Rather than asking your team to build domain schemas and label datasets from scratch, the SLM Factory uses domain-aware ontologies that are pre-built and extensible for enterprise contexts. Conversations, documents, and workflows are automatically segmented and contextualized. Automated data agents dramatically accelerate discovery, labeling, and transformation.

The result: you start with better signal quality on day one, without a months-long data engineering project as a prerequisite.

Models Designed for Business Operations, Not Experimentation

General-purpose model architectures require significant adaptation to perform well on specific enterprise tasks. Uniphore’s SLM architectures are purpose-built for the kinds of tasks that actually matter in enterprise workflows: conversation understanding, extraction, compliance detection, and action prediction. Critically, we don’t stop at generic enterprise tasks. We maintain domain-specific models tailored for billing, insurance, back-office operations, claims processing, collections & disputes, customer onboarding, and compliance & audit, so your models arrive with deep contextual knowledge of your industry, not just language in general. They come pre-optimized for production-grade latency and cost, not tuned for research benchmarks.

Fine-Tuning as a Repeatable Process, Not a Bespoke Project

Fine-tuning in a DIY environment tends to be a one-time heroic effort — a bespoke project that requires constant re-engineering as your data and workflows evolve. The SLM Factory makes fine-tuning a repeatable, automated process. Training data is derived directly from your enterprise workflows. Evaluation runs continuously during training. Guardrails and constraints are baked into the training lifecycle, not bolted on afterward.

Governance and Guardrails Built In by Design

This is where the gap between generic tooling and enterprise-ready platforms is widest. General-purpose safety filters are a starting point, not a solution. Enterprise AI requires semantic guardrails, procedural constraints, and policy-driven evaluation that maps directly to your compliance and operational requirements.

In the SLM Factory, governance isn’t a layer you add; it’s embedded throughout. Evaluation is tied to enterprise KPIs: accuracy, hallucination risk, compliance alignment, and workflow fit. The result is AI behavior that is predictable, auditable, and defensible in production.

From Model to Production in Weeks, Not Months

Perhaps the most tangible difference is time to value. DIY SLM programs routinely take 6–12 months to reach production. With the SLM Factory, deployment into production-grade AI agents is a single integrated step rather than a separate engineering workstream. Monitoring, drift detection, and continuous improvement loops are built in, so your operational overhead post-deployment is dramatically lower.

The Strategic Trade-Off

None of this means the DIY path is wrong for every organization. If you have a large, specialized AI platform team, deep ML expertise, and a mandate to build proprietary model infrastructure as a core competency, building your own factory can make sense.

But for many enterprise AI leaders, the cost of engineering talent, the elapsed time before business impact, and the risk of building the wrong architecture or training on poorly structured data aren't just inconveniences. They're competitive risks in a fast-moving market.

The SLM Factory approach doesn’t take away your ownership. You still own your models and your data. What it removes is the burden of building and operating the factory itself — so your team can focus on what matters: driving outcomes.

The Bottom Line

Small Language Models represent a genuine step forward for enterprise AI: more accurate, more cost-efficient, and more governable than general-purpose LLMs for high-volume business workflows. But realizing that value requires more than access to infrastructure and tools. It requires a factory. The question is whether you build it or buy it.

For enterprise leaders who need to move fast, reduce risk, and demonstrate business outcomes — not model engineering milestones — the answer is increasingly clear.

Explore Uniphore’s Business AI Cloud, which provides a turnkey SLM Factory purpose-built for enterprise workflows.

FAQs About Small Language Models (SLMs)

What are Small Language Models (SLMs)?

Small Language Models (SLMs) are a compact, task-focused alternative to Large Language Models (LLMs). They offer a fast, efficient solution grounded in business context for use cases where an LLM may be too resource-intensive or simply unnecessary.

What is a turnkey SLM Factory approach?

An SLM Factory is a platform for continuously creating fine-tuned, domain-specific models — powered by your enterprise data, context, and processes. Rather than assembling and operating your own model-building stack from scratch, an SLM Factory gives you the entire pipeline as a single, integrated system.

How much time can my organization save by using a turnkey SLM Factory approach instead of building our own?

DIY SLM programs tend to take 6–12 months to reach production. With the SLM Factory, deployment into production-grade AI agents is a single integrated step, so models can reach production in weeks, with significantly lower operational overhead after deployment.

How does a turnkey SLM Factory approach handle data governance and AI guardrails?

In the SLM Factory, governance is embedded throughout, and evaluation is tied to the enterprise KPIs that matter: accuracy, hallucination risk, compliance alignment, and workflow fit. The result is AI behavior that is predictable, auditable, and defensible in production.

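What kinds of organizations can benefit most from a turnkey SLM Factory approach?

Organizations that want to deploy intelligent workflows that drive measurable outcomes, without building and operating their own model factories, benefit most from a turnkey SLM Factory approach. Enterprises with a large, specialized AI platform team, deep ML expertise, and a mandate to build proprietary model infrastructure as a core competency may still choose to build their own.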