Small Language Models (SLMs)

What Business Leaders Need to Know

Small Language Models (SLMs) are smaller, faster, less expensive models, laser-focused on a business’s own context rather than attempting to know everything. Unlike their massive Large Language Model (LLM) counterparts, SLMs can be owned, governed, and optimized entirely by your organization. For enterprise use cases like conversation understanding, compliance detection, workflow automation, and action prediction, well-tuned Small Language Models can match or outperform general-purpose models at a fraction of the cost.

For enterprises, small language models offer a powerful alternative to massive AI systems: lower cost, faster inference, better privacy control, and easier deployment on private infrastructure. When trained for specific tasks, SLMs can often match or outperform larger models while using a fraction of the computing cost.

As organizations look for secure, sovereign, and cost-efficient AI, SLMs are becoming a core building block for enterprise AI architecture.

What are Small Language Models?

Small language models (SLMs) — also known as small LLMs — are AI models designed for natural language processing (NLP) tasks but built with significantly fewer parameters than traditional large language models.

A small language model is a natural language AI model that typically contains fewer than roughly 30 to 70 billion parameters (the exact cutoff varies by source). It is optimized for efficiency, task specialization, and deployment on constrained infrastructure.

They are built with the same core techniques as LLMs, including transformer architectures and training on large datasets. The difference is that they are sized and tuned for efficiency, specialization, and deployment flexibility.

Key characteristics of SLMs

Compared to LLMs, small language models typically provide:

- Lower computational requirements

- Faster response times

- Lower inference costs

- Easier on-premise or edge deployment

- Greater control over data and security

Because of these advantages, SLMs are increasingly preferred over LLMs in enterprise AI systems, agent architectures, and automation workflows.

The SLM Revolution

How Small Models and RAFT Are Transforming Business AI

SLMs vs LLMs

| Feature | Small Language Models (SLMs) | Large Language Models (LLMs) |
|---|---|---|
| Parameters | Millions to tens of billions | Tens to hundreds of billions |
| Infrastructure | Can run on modest GPUs or CPUs | Requires large-scale GPU clusters |
| Latency | Low | Higher |
| Cost per inference | Low | High |
| Customization | Easier to fine-tune | Often harder and more expensive |
| Privacy control | Easier to run privately | Often cloud-based |
| Best use cases | Specialized enterprise tasks | General-purpose reasoning |
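The infrastructure row follows largely from parameter count. A common rule of thumb is that weight memory is roughly the number of parameters multiplied by the bytes stored per parameter. The sketch below is a back-of-the-envelope estimate under stated assumptions: it ignores activations, the KV cache, and runtime overhead. The 7B and 70B sizes are illustrative and do not refer to specific products.

```python
# Rough estimate of the memory needed just to hold model weights.
# Assumption: weights dominate; activations, KV cache, and framework
# overhead are ignored, so real deployments need extra headroom.

BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16": 2.0,  # bf16 also uses 2 bytes per parameter
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Return approximate weight memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Illustrative sizes: a 7B "small" model vs a 70B "large" model.
for name, params in [("7B model", 7e9), ("70B model", 70e9)]:
    for precision in ("fp16", "int4"):
        gb = weight_memory_gb(params, precision)
        print(f"{name} @ {precision}: ~{gb:.1f} GB")

# 7B model @ fp16: ~14.0 GB
# 7B model @ int4: ~3.5 GB
# 70B model @ fp16: ~140.0 GB
# 70B model @ int4: ~35.0 GB
```

Under these assumptions, a 4-bit 7B model needs only a few gigabytes for its weights. A 70B model in fp16 needs more than a single 80 GB GPU can hold, which is why large models typically run on multi-GPU clusters.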

Large models excel at general intelligence and broad reasoning, but SLMs are often better at focused, repeatable enterprise tasks.

Small Language Models represent a different architectural philosophy: depth over breadth and precision over generalization. Instead of trying to know everything about everything, SLMs excel at knowing everything about something specific.

Recent research validates this approach empirically. Beyond NVIDIA’s study of SLMs in agentic AI systems (discussed below), multiple research efforts demonstrate SLM effectiveness:

- Microsoft’s Phi-3 research shows a 3.8B-parameter model (phi-3-mini) rivaling much larger models, such as Mixtral 8x7B and GPT-3.5, on standard benchmarks

- Google’s specialized model studies demonstrate task-specific models outperforming generalists

- Stanford’s analysis of model efficiency reveals diminishing returns at scale for specialized tasks

The SLM Advantage Matrix

| Dimension | LLMs (70B+) | SLMs (1B-13B) | Business Impact |
|---|---|---|---|
| Response Latency | 2-5 seconds | 50-200 ms | 10x improvement in user experience |
| Infrastructure Cost | $50K-200K/month | $2K-10K/month | 90% cost reduction |
| Domain Accuracy | 70-85% | 90-98% | Reduced error rates, higher trust |
| Customization Speed | Weeks | Hours-Days | Faster time to market |
| Compliance Control | Difficult | Full Control | Regulatory requirements met |
| Energy Consumption | High | Low | 95% reduction in carbon footprint |

Note: Fine-tuned SLMs outperform LLMs on most accuracy metrics. The exception is comprehensive response generation, where LLMs retain an edge because SLMs are optimized for focused, task-specific excellence rather than broad coverage.

Why Small Language Models Are Gaining Popularity

In the early days of generative AI, the dominant assumption was that bigger models always perform better. However, research and enterprise deployments increasingly show that smaller models can outperform larger ones on specific tasks when optimized for them.

Several factors are driving this shift:

Cost efficiency

Large models require expensive infrastructure and high inference costs. Small language models dramatically reduce these expenses.

For enterprises deploying AI at scale, across millions of interactions per day, SLMs can cut AI operating costs substantially. The infrastructure figures above point to reductions on the order of 90%.

Faster responsiveness

Because they are smaller, SLMs generate responses faster, which makes them ideal for real-time enterprise applications. They can deliver roughly a 10x improvement in response latency over LLMs. They can also be customized in hours or days rather than weeks, shortening time to market.

Private deployment and security

Small language models can often run:

- On-premise

- In private clouds

- On edge devices

This helps organizations to maintain complete control and security over their sensitive data, a key requirement in regulated industries such as finance, healthcare, and government.

Task specialization

Small models can be trained or fine-tuned for highly specific tasks such as:

- Document classification

- Workflow automation

- Code generation for internal tools

- GUI navigation and automation

- Domain-specific question answering

When trained properly, task-optimized SLMs can outperform much larger models on those tasks while costing far less to run.

Architecture of Small Language Models

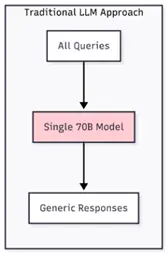

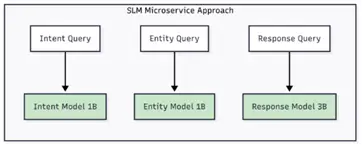

SLMs enable more elegant system architectures. Instead of routing all requests through a monolithic large model, you can deploy specialized models for different functions. Like importing specific “kung-fu” skills in The Matrix, each model masters its own domain.

This microservice approach to AI provides better observability, easier debugging, and more predictable scaling. NVIDIA’s analysis of popular open-source agents found that 40-70% of their LLM queries could be reliably handled by appropriately specialized SLMs.
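The microservice pattern can be sketched as a simple router. It sends each request to a specialized SLM and falls back to a general-purpose LLM when no specialist applies. This is a minimal sketch under stated assumptions. The task labels, the keyword-based classifier, and the model callables are hypothetical stand-ins, not a Uniphore API. In practice, the classifier would more likely be a small model of its own.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Tuple

# Any callable mapping a prompt to a response counts as a "model" here.
# Hypothetical stand-ins: real systems would call model-serving endpoints.
Model = Callable[[str], str]


@dataclass
class SLMRouter:
    """Send each request to a specialized SLM, else to a general-purpose LLM."""

    specialists: Dict[str, Model]
    fallback: Model
    # Hypothetical keyword rules standing in for a learned task classifier.
    keywords: Dict[str, Tuple[str, ...]] = field(default_factory=dict)

    def classify(self, request: str) -> Optional[str]:
        """Return the first task whose keywords appear in the request, if any."""
        text = request.lower()
        for task, words in self.keywords.items():
            if any(word in text for word in words):
                return task
        return None

    def handle(self, request: str) -> Tuple[str, str]:
        """Return (route taken, response)."""
        task = self.classify(request)
        if task is not None and task in self.specialists:
            return task, self.specialists[task](request)
        return "fallback", self.fallback(request)


router = SLMRouter(
    specialists={
        "compliance": lambda r: f"[compliance-slm] {r}",
        "ticket_routing": lambda r: f"[routing-slm] {r}",
    },
    fallback=lambda r: f"[general-llm] {r}",
    keywords={
        "compliance": ("gdpr", "disclosure"),
        "ticket_routing": ("ticket", "escalate"),
    },
)

print(router.handle("Please escalate ticket 123"))
# ('ticket_routing', '[routing-slm] Please escalate ticket 123')
print(router.handle("Draft a product launch announcement"))
# ('fallback', '[general-llm] Draft a product launch announcement')
```

Because each specialist sits behind its own entry in the router, it can be monitored, scaled, and updated independently. The fallback path catches the share of requests that no specialist is trusted to handle.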

Most small language models use the same core architecture as large models: transformers. However, SLMs often incorporate additional optimization techniques.

Common techniques used in SLMs

Here’s the critical challenge that determines SLM success in production: how do you equip these specialized models with the domain knowledge they need to excel? This is where traditional Retrieval-Augmented Generation (RAG), which looks up documents at query time, falls short. The Retrieval-Augmented Fine-Tuning (RAFT) approach, which trains domain knowledge into the model itself, provides the solution.
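To make the idea concrete, here is a minimal sketch of how RAFT-style training examples are commonly assembled, following the recipe in the original RAFT paper from UC Berkeley. Each question is paired with its "oracle" document (the one containing the answer) and several distractor documents. In a fraction of examples, the oracle is deliberately withheld, which pushes the model to internalize the domain knowledge rather than copy it from context. The field names, the `oracle_fraction` default, and the data layout are assumptions for illustration. They do not describe Uniphore’s production pipeline.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class QAPair:
    question: str
    answer: str      # ideally a reasoning-style answer grounded in the oracle
    oracle_doc: str  # the document that actually contains the answer


@dataclass
class RAFTExample:
    question: str
    context: List[str]  # shuffled documents shown to the model during training
    answer: str


def build_raft_examples(
    pairs: List[QAPair],
    corpus: List[str],
    num_distractors: int = 3,
    oracle_fraction: float = 0.8,  # assumed value; tune per dataset
    seed: int = 0,
) -> List[RAFTExample]:
    """Pair each question with distractors and, usually, its oracle document."""
    rng = random.Random(seed)
    examples = []
    for pair in pairs:
        # Distractors are drawn from corpus documents other than the oracle.
        candidates = [doc for doc in corpus if doc != pair.oracle_doc]
        k = min(num_distractors, len(candidates))
        distractors = rng.sample(candidates, k=k)
        if rng.random() < oracle_fraction:
            context = distractors + [pair.oracle_doc]
        else:
            # Oracle withheld: the answer must come from internalized knowledge.
            context = list(distractors)
        rng.shuffle(context)
        examples.append(RAFTExample(pair.question, context, pair.answer))
    return examples


# Hypothetical telecom-style corpus for illustration only.
corpus = [
    "Plan A includes 10 GB of data per month.",
    "Plan B includes unlimited domestic calls.",
    "Roaming fees apply outside the home network.",
    "Support is available 24/7 via chat.",
]
pairs = [QAPair("How much data does Plan A include?",
                "Plan A includes 10 GB of data per month.", corpus[0])]

for ex in build_raft_examples(pairs, corpus, num_distractors=2):
    print(ex.question, ex.context)
```

The resulting examples would then feed a standard supervised fine-tuning run. Training on a mix of oracle-present and oracle-absent examples teaches the model both to ignore irrelevant documents and to answer from what it has learned.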

LoRA Integration for Simplified Fine-tuning

Our RAFT implementation leverages LoRA (Low-Rank Adaptation) techniques to make the fine-tuning process more efficient and manageable. LoRA freezes the base model’s weights and trains only small low-rank adapter matrices, so far fewer parameters need updating. Without LoRA, fine-tuning remains a resource-intensive exercise. By creating LoRA adapters and embedding them into the SLM, we make fine-tuning simpler, faster, and more cost-effective while maintaining the quality improvements that RAFT provides.
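The savings come from the shape of the update. Take a frozen weight matrix W of shape d × k. LoRA trains two small matrices, B (d × r) and A (r × k), with a rank r much smaller than d and k. The adapted layer then computes W x + (α / r) · B A x. The plain-Python sketch below, which uses no ML framework, shows that forward pass and counts trainable parameters. The 4096 × 4096 layer and rank 8 are illustrative assumptions, not settings from our RAFT implementation.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, x: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector, alpha: float) -> Vector:
    """Compute W x + (alpha / r) * B (A x); W is frozen, A and B are trained."""
    r = len(A)                       # rank = number of rows of A
    base = matvec(W, x)              # frozen pretrained path
    delta = matvec(B, matvec(A, x))  # low-rank adapter path
    return [b + (alpha / r) * d for b, d in zip(base, delta)]


def trainable_params(d: int, k: int, r: int) -> Tuple[int, int]:
    """Return (full fine-tuning params, LoRA params) for one d x k layer."""
    return d * k, r * (d + k)


# Tiny demo. In real LoRA training B usually starts at zero so the adapted
# layer initially matches the frozen model; nonzero B here is for illustration.
W = [[1.0, 0.0], [0.0, 1.0]]  # frozen 2 x 2 weight (d = 2, k = 2)
A = [[1.0, 1.0]]              # r x k with r = 1
B = [[1.0], [0.0]]            # d x r
print(lora_forward(W, A, B, [2.0, 3.0], alpha=1.0))  # [7.0, 3.0]

full, lora = trainable_params(4096, 4096, r=8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
# full: 16,777,216  lora: 65,536  ratio: 0.39%
```

For this illustrative layer, the adapter trains well under 1% of the parameters that full fine-tuning would update. That reduction is what makes repeated domain-specific fine-tuning cheaper and faster.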

RAFT’s Competitive Advantages

- Latency: 50-200ms vs 1000ms+ for RAG

- Cost per query: 100X reduction compared to LLM infrastructure requirements

- Infrastructure complexity: Single model deployment vs retrieval pipeline

- Deeper understanding: Knowledge becomes part of model weights

- Better reasoning: Can make connections across internalized information

- Consistency: Same query always produces consistent responses

- Reliability: No external retrieval dependencies

- Scalability: Linear scaling with standard model serving

- Security: No sensitive data in external vector stores

Small Language Models for Enterprise AI

For enterprises, the key question is not simply “how powerful is the model?” but “how efficiently can it deliver business outcomes?”

Small language models excel in enterprise environments because they enable:

- Predictable costs

- Controlled infrastructure

- Faster deployment cycles

- Stronger security and governance

Enterprise use cases

Common enterprise applications for SLMs include:

- Workflow automation: Small models can orchestrate complex processes such as approvals, ticket routing, or knowledge retrieval.

- Document processing: SLMs extract and summarize information from contracts, reports, and internal documents.

- Sales and marketing AI: AI tools and AI-powered CDPs analyze customer interactions, generate summaries, and assist sales teams.

- Software automation: SLMs can control interfaces, perform GUI navigation tasks, and automate digital workflows.

These use cases illustrate how small language models function as “executors” within AI systems, carrying out tasks efficiently and reliably.

Small Language Models in AI Agent Architectures

Modern AI systems increasingly use multi-model architectures, where different models perform different roles.

In these architectures:

- Large models provide reasoning and planning

- Small models execute specific tasks

This design allows organizations to combine the intelligence of large models with the efficiency of small models.

For example:

- A large model plans a workflow

- Smaller models execute each step

- The system orchestrates results

This approach dramatically improves scalability and cost efficiency for technical teams and entire organizations.
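The plan, execute, and orchestrate pattern described above can be sketched in a few lines. This is a minimal illustration under assumed interfaces. `plan` stands in for a call to a large model that returns typed steps, and each entry in `EXECUTORS` stands in for a specialized SLM. All names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str]  # (task type, input for that step)


def plan(goal: str) -> List[Step]:
    """Stand-in for a large model that breaks a goal into typed steps.

    Hypothetical: a real planner would prompt an LLM and parse its output.
    """
    return [("extract", goal), ("classify", goal), ("summarize", goal)]


# Stand-ins for specialized small models, one per task type (hypothetical).
EXECUTORS: Dict[str, Callable[[str], str]] = {
    "extract": lambda text: f"entities({text})",
    "classify": lambda text: f"label({text})",
    "summarize": lambda text: f"summary({text})",
}


def run_workflow(goal: str) -> Dict[str, str]:
    """Plan with the large model, execute each step with an SLM, collect results."""
    results: Dict[str, str] = {}
    for task, payload in plan(goal):
        executor = EXECUTORS.get(task)
        if executor is None:
            raise ValueError(f"no small model registered for task {task!r}")
        results[task] = executor(payload)
    return results


print(run_workflow("contract.pdf"))
# {'extract': 'entities(contract.pdf)', 'classify': 'label(contract.pdf)', 'summarize': 'summary(contract.pdf)'}
```

The expensive large model is called once per workflow to produce the plan. The cheaper small models handle the per-step work, which is where most of the volume, and therefore most of the cost, sits.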

Benefits of Small Language Models for Businesses


Enterprises adopting SLMs typically gain several strategic advantages, including:

Lower AI operating costs

Because small models require less computing power, companies can scale AI usage without runaway infrastructure costs.

Faster deployment

Smaller models are easier to deploy and update, enabling faster innovation cycles and results.

Improved data security

Organizations can run models in private infrastructure, keeping sensitive data internal.

Higher reliability for specialized tasks

SLMs trained on specific workflows often outperform general-purpose models in those tasks.

Better control over AI systems

Enterprises can customize models for the specific industries, regulations, or internal processes they need.

Limitations of Small Language Models

Despite their advantages, small language models also have limitations.

Reduced general knowledge

SLMs typically have less world knowledge than large frontier models.

Less advanced reasoning

Complex multi-step reasoning tasks may still favor large models.

Narrower capabilities

Small models are often optimized for specific tasks rather than broad intelligence.

Because of these tradeoffs, many organizations deploy hybrid AI architectures combining both large and small models to meet their needs.

Case Study of a Small Language Model: Enterprise Customer Service

At Uniphore, we deployed a RAFT-enhanced SLM for a Fortune 500 telecommunications company handling 50,000+ customer interactions daily.

| Metric | Before (LLM + RAG) | After (SLM + RAFT) |
|---|---|---|
| Response latency | 2300 ms | 180 ms |
| Infrastructure cost | $180K/month | $15K/month |
| Accuracy (domain-specific queries) | 82% | 96% |
| Hallucination rate | 12% | 1.2% |
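The headline improvements follow directly from the case study figures. A quick check in Python, using only the before and after numbers reported in the table:

```python
# Before/after figures from the case study table.
before = {"latency_ms": 2300, "cost_per_month": 180_000,
          "accuracy_pct": 82, "hallucination_rate": 0.12}
after = {"latency_ms": 180, "cost_per_month": 15_000,
         "accuracy_pct": 96, "hallucination_rate": 0.012}

latency_speedup = before["latency_ms"] / after["latency_ms"]
cost_reduction = 1 - after["cost_per_month"] / before["cost_per_month"]
annual_savings = (before["cost_per_month"] - after["cost_per_month"]) * 12
accuracy_gain = after["accuracy_pct"] - before["accuracy_pct"]
hallucination_drop = before["hallucination_rate"] / after["hallucination_rate"]

print(f"Latency: {latency_speedup:.1f}x faster")            # 12.8x faster
print(f"Infrastructure cost: {cost_reduction:.1%} lower")  # 91.7% lower
print(f"Annual savings: ${annual_savings:,}")               # $1,980,000
print(f"Accuracy: +{accuracy_gain} percentage points")      # +14 percentage points
print(f"Hallucinations: {hallucination_drop:.0f}x fewer")   # 10x fewer
```

These figures line up with the roughly 10x latency and 90% cost ranges in the SLM Advantage Matrix.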

How Small Language Models Improve Enterprise ROI

One of the biggest barriers to enterprise AI adoption is cost.

Large models can require massive compute resources, making widespread deployment expensive. Here’s where small language models come in.

By reducing infrastructure requirements and inference costs, SLMs allow companies to:

- Deploy AI across more workflows

- Automate more processes

- Scale AI without escalating cloud costs

This directly improves AI return on investment (ROI). When combined with secure infrastructure, orchestration layers, and enterprise governance, small language models enable organizations to move from AI experimentation to AI at scale.

The Future of Small Language Models

Industry trends suggest that SLMs will play an increasingly central role in enterprise AI.

Key developments include:

- Domain-specific models for industries

- Edge AI deployments

- AI agent ecosystems

- Sovereign AI infrastructure

- Task-specialized model training

As AI adoption accelerates, organizations are realizing that efficient models often deliver the best business outcomes.

For enterprise businesses, the question is shifting from: “Which model is biggest?” to “Which model delivers the most value per compute dollar?”

Not surprisingly, small language models are emerging as a powerful answer.

Contact Us

Want to learn how small language models can power secure, cost-efficient enterprise AI for your organization?

Uniphore’s Business AI Cloud helps enterprise organizations deploy private, composable AI architectures that combine large models, small language models, and intelligent agents to deliver real business outcomes.

Contact us to learn how your organization can deploy enterprise AI that is secure, scalable, and cost-efficient.

FAQ: Small Language Models

What is a small language model (SLM)?

A small language model (SLM) is an AI model designed for natural language processing tasks that contains fewer parameters than traditional large language models. These models prioritize efficiency, lower cost, and specialized performance.

What are “small LLMs”?

“Small LLMs” is an informal term commonly used in developer communities (such as Reddit and GitHub) to refer to small language models that use the same architecture as large language models but with fewer parameters.

How many parameters do small language models have?

Small language models typically range from hundreds of millions to tens of billions of parameters, while large language models may exceed hundreds of billions.

Are small language models better than large language models?

For general knowledge tasks, large models may perform better. However, for specialized enterprise workflows, small models can match or outperform larger models while using far less compute.

Can small language models run on private infrastructure?

Yes. Many SLMs can run on private cloud, on-premise servers, or edge devices, making them ideal for organizations that require strict data privacy and security.

Which industries benefit most from small language models?

Industries that benefit most include:

• Financial services

• Healthcare

• Telecommunications

• Retail and e-commerce

• Government and public sector

These sectors often require secure, scalable AI deployments with strict data control.

Can small language models be used in AI agent systems?

Yes. Many modern AI architectures use small language models as task executors within AI agent systems, while larger models handle reasoning and planning.